Pop-science is something of a wonder. When I was growing up in the 90s, Bill Nye was the face of science. In the 2000s, Malcolm Gladwell’s books made scientific results ever more approachable and showed that the demand for explanations of science persisted into modern times, just as it had for A Brief History of Time (1988), Cosmos (1980), and even The Origin of Species (1859) before them. Neil deGrasse Tyson rode the advent of social media to become the face of pop-science in the 2010s. Tyson’s success, in particular, set off a few waves in different directions. One wave went further into the pop side of pop-science, with fans following memes like “Yeah, science, bitch!” and inspiring accounts that generate and monetize that content. The uber-nerds bookmarked XKCD (before the feed existed) and built their own social media sites like LessWrong to sate their desire for more technical and data-driven discussions. The content creators took to YouTube to bring the technology to life, like a stunning video case study of blue LEDs.

Another personal favorite, Asianometry, documents how history, geopolitics, and technology are intertwined. Business professors update their courses with new examples and phenomena, merging neuroscience, psychology, and marketing. Bro science races through podcasts. I am not sure science, even during the Enlightenment or the Industrial Revolution, has ever had more content, more eyeballs, and more money to be made from explaining theory and data.

This ecosystem has hummed along through the replication crisis. If you have paid attention to science at all, this crisis is not news to you. Many social sciences (notably psychology and behavioral economics) are struggling to replicate some of their fields’ foundational research. In the early 2010s, the problem was recognized as a full-fledged crisis. To be clear, this is not about fraudulent papers. The social sciences are inherently difficult to study because people are difficult to study. People grow and change, and societies change completely. One way I think about the replication crisis is that it is about understanding how prevalent, and how large, historically observed behavioral and social phenomena are today. The replication crisis plays out in the same venues as the leading edge of any science:

statistics and letters to the editor in journals

the scientific news groups that study them

the niche internet haunts and watering holes for the professionals that care about understanding and implementing research

The experts in this space will flat out tell you that most science is not written for a layperson to understand; it is part of a larger conversation the layperson is not in. Little of that nuance translates to the realm of science as entertainment anyway. At its worst, science as entertainment gives grifters room to spin up debates that “Science is a liar sometimes.”

Ultimately, you are on your own to follow the space and interpret the results—or rather, determine which experts to listen to when they clash. The books are still for sale, though maybe they get a new edition with a defense of the work. Many (but not all) Wikipedia pages mention replication controversies now. The science-y social media accounts target what gets eyeballs and will continue to post whatever entertains people. Maybe you know some grad students in the field who will tell you where the scientific consensus stands. Maybe you are reading the writers you trust on Vox, LessWrong, or Astral Codex Ten who happen to cover the issue (or cover someone else covering the topic).

At best, when you encounter some cool behavioral research, you develop the habit of asking: does it replicate? Then you google “<social phenomenon> replication”, parse the results, and try to remember, weeks and months later, whether the finding survived replication or was narrowed in scope. As someone with an engineering but not a research background, I found this whole process deeply tedious and draining. It’s far too easy for simple stories to travel and too hard to retain the nuance required for real understanding.

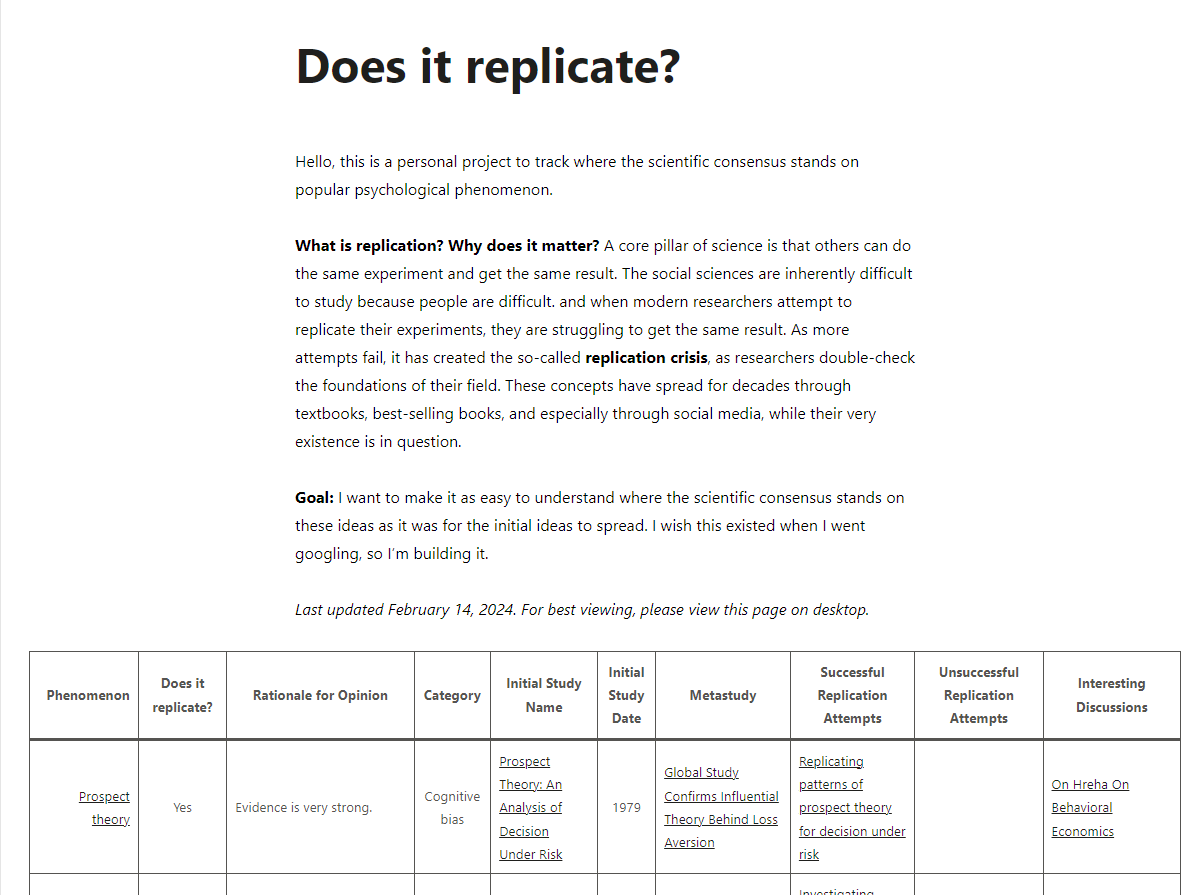

So I started writing down what I learned, where I learned it, and what I wanted to remember from all that reading, and I thought it worth sharing to save others the time. This is replicateornot.com.

What is the goal?

Replicateornot.com is a simple resource for laypeople that tracks whether, and how well, the most-discussed science concepts in books, marketing theory, and pop culture replicate, so readers can update their prior beliefs accordingly.

Why is it called ReplicateOrNot.com?

Part ode to HotOrNot.com, part something I could remember.

Who are you to comment on the scientific consensus about whether something replicates?

My friend with a degree in psychology: “just because you (Rob) can’t understand something doesn’t mean it’s bullshit. That would invalidate… well, most of science to be frank.”

This is truer than I want it to be. I do not pretend to be a part of the scientific debate; I am far downstream. I make no claim to truly understand the scientific consensus either, since, as I understand it, consensus forms as much in the hallways of research labs as in the journals on the web. I am just writing down what I found, what I took from it, and why I came to that conclusion, citing my sources and jumping on the ego grenade that is Cunningham’s Law so anyone else interested in this can benefit.

Many of these conclusions are weakly held, and I welcome input from those more directly involved.

Will Replicateornot.com cover the whole replication crisis?

For now, that is not the goal. I just want to cover the most viral findings, the ones that show up most on Reddit and Twitter.

Why is Replicateornot.com so ugly?

While I am inspired by other bro-science fighters like Examine.com, I thought it best to go fast. When I saw another post praising simple-if-ugly designs, I decided to ship it now and make it pretty and detailed later if people like it.

What are your criteria for assessing what replicates and what does not?

I made a few broad categories drawn from how I saw researchers discuss the implications of replication attempts. I am at heart an empiricist: I care what works. If new theories guide me to try something I wouldn’t have tried and it works, I am pleased because it works (or works better than what I did before). If a new paper shows the effect size for something isn’t as big as predicted but it works for me, I will keep doing it. If it provides a useful framework for understanding the world, I will continue to use that framework, knowing there are others that could also apply. While entire studies may not replicate exactly or generalize to the whole world, I want to emphasize the parts that still hold and understand any proven limitations.

Should I assume the replication crisis is evidence academics are trying to lie to me?

No. The physical universe itself is pretty consistent except for all the times it’s not, and studying people is even more difficult. Academic fraud is a real issue but a separate one. Replication failures tend to stem from methodology or from the limitations of theories developed to explain observed interactions.

Your beliefs are bad, and I want to correct them. How can I do so without hurting your feelings?

You are a saint. Please drop a note in the comments here, shoot me an email at main@replicateornot.com, or tag me on Twitter @replicateornot.